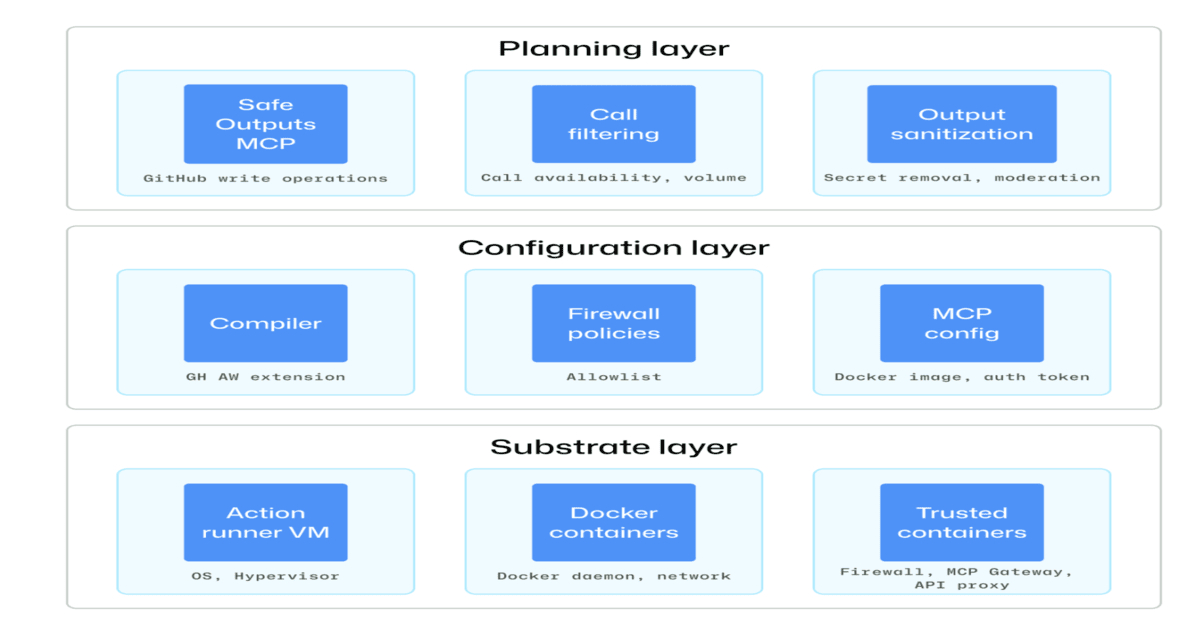

Modern CI/CD pipelines are increasingly integrating autonomous AI agents to automate complex tasks, but this introduces new security challenges. GitHub has proposed a comprehensive defense-in-depth architecture designed to safely incorporate agentic workflows. This approach emphasizes strong isolation, constrained execution environments, and full audit trails to mitigate risks like prompt injection, privilege escalation, and unintended actions. Below, we answer key questions about this security framework.

What are the primary security risks associated with agentic workflows in CI/CD?

Agentic workflows in CI/CD pipelines can automate repetitive tasks, make decisions, and interact with external services. However, these autonomous agents introduce several vulnerabilities. The most critical risks include prompt injection, where malicious inputs manipulate an AI agent's behavior; privilege escalation, where an agent exploits excessive permissions to access sensitive resources; and unintended actions, where an agent performs harmful operations due to ambiguous or incomplete instructions. Additionally, agents might leak confidential data through their outputs or become compromised via supply chain attacks. Without proper safeguards, these issues can lead to unauthorized code changes, deployment of vulnerable software, or exposure of secrets. GitHub's design specifically targets these threats by enforcing strict boundaries on agent capabilities and ensuring every action is recorded for later review.

How does GitHub propose to isolate AI agents from critical systems?

Isolation is a cornerstone of GitHub's security architecture for agentic workflows. The approach uses sandboxed environments that are ephemeral and fully contained. Each agent runs in its own isolated container or virtual machine, with no direct network access to production systems or internal services. These sandboxes are configured with minimal dependencies: only the libraries and tools explicitly required for the agent's task are available. Furthermore, file system access is restricted to a temporary workspace that is destroyed after the workflow completes, which keeps an agent from modifying persistent data and limits which secrets it can reach. By applying the principle of least privilege at the operating system level, the design aims to keep the blast radius within the sandbox even if an agent is compromised. This is similar to how CI/CD runners are often sandboxed, but tailored to the needs of AI-driven decision-making.
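
The temporary-workspace idea can be illustrated with a minimal Python sketch. It is not GitHub's implementation, and it is not a substitute for container or VM isolation; it only shows the two properties described above at process level: a workspace that disappears when the run ends, and a check that rejects paths pointing outside it. The names `ephemeral_workspace` and `resolve_inside` are hypothetical.

```python
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def ephemeral_workspace():
    """Yield a temporary directory that is deleted when the block exits."""
    with tempfile.TemporaryDirectory(prefix="agent-ws-") as tmp:
        yield Path(tmp).resolve()


def resolve_inside(workspace: Path, requested: str) -> Path:
    """Resolve `requested` against the workspace and reject any escape.

    Absolute paths and `..` segments both end up outside the workspace
    after resolution, so both raise PermissionError.
    """
    candidate = (workspace / requested).resolve()
    if not candidate.is_relative_to(workspace):  # Python 3.9+
        raise PermissionError(f"path escapes workspace: {requested}")
    return candidate
```

An agent tool wrapper would call `resolve_inside` before every file operation, so that a request such as `../secrets.env` fails before any file is opened.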

What role does constrained execution play in preventing privilege escalation?

Constrained execution goes beyond basic isolation by tightly controlling what actions an agent can perform. GitHub enforces restricted permissions through fine-grained access control policies. For example, an agent might be allowed to read a specific repository but not write to it, or it may be limited to API calls that are idempotent and non-destructive. These permissions are scoped to the agent's identity and cannot be escalated through inheritance or token reuse. Additionally, GitHub recommends just-in-time (JIT) credential provisioning, where temporary tokens with limited validity are issued for each workflow run. This prevents an agent from holding on to long-lived secrets that could be exfiltrated. By combining role-based access control with dynamic credential management, the architecture reduces the risk of an agent accidentally or maliciously gaining administrative privileges. Regular audits of permission assignments further ensure that agents retain only the minimum access needed for their defined tasks.
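
As a rough illustration of per-run, short-lived credentials, the sketch below models a token that is bound to one workflow run, carries an explicit set of scopes, and expires after a fixed lifetime. Everything here is an assumption made for the example (the `ScopedToken` type, the scope strings such as `repo:read`, the 15-minute default); a real deployment would obtain tokens from its identity provider or vault rather than mint them in process.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedToken:
    value: str            # opaque random secret
    run_id: str           # workflow run the token is bound to
    scopes: frozenset     # explicit permissions, e.g. {"repo:read"}
    expires_at: float     # Unix timestamp after which the token is invalid


def issue_token(run_id, scopes, ttl_seconds=900, now=None):
    """Mint a token for a single run with a limited lifetime."""
    now = time.time() if now is None else now
    return ScopedToken(
        value=secrets.token_urlsafe(32),
        run_id=run_id,
        scopes=frozenset(scopes),
        expires_at=now + ttl_seconds,
    )


def is_allowed(token, run_id, scope, now=None):
    """Allow an action only for the right run, an unexpired token, and a granted scope."""
    now = time.time() if now is None else now
    return (
        token.run_id == run_id
        and now < token.expires_at
        and scope in token.scopes
    )
```

Because the token is frozen and its scopes are fixed at issue time, there is no code path that widens permissions after the fact; a different permission requires a new token.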

How does GitHub ensure auditability and traceability of agent actions?

Full execution traceability is a key requirement for secure agentic workflows. GitHub's architecture mandates that every action taken by an AI agent is logged with detailed metadata: the exact command issued, the timestamp, the source of the prompt, the input and output data, and the decision-making process if available. These logs are stored in an immutable, append-only format within a centralized audit store, making tampering extremely difficult. Additionally, the system supports human-in-the-loop review by providing dashboards that visualize the agent's workflow step by step. Admins can replay actions to verify correctness and detect anomalies. This transparency helps in post-incident investigations and compliance with regulations. By linking agent activity to specific task IDs and execution contexts, GitHub enables rapid root cause analysis if a security incident occurs. The audit trail also serves as a deterrent against insider threats by ensuring accountability.
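
One common way to make an append-only log tamper-evident is to chain entries by hash, so that changing any earlier record invalidates every later one. The source does not say which mechanism GitHub uses; the sketch below is an assumption chosen for illustration, with hypothetical field names taken from the metadata listed above (command, timestamp, prompt source, task ID).

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record):
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    def __init__(self):
        self._entries = []

    def append(self, task_id, command, timestamp, prompt_source):
        """Add a record that commits to the hash of the previous record."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        record = {
            "task_id": task_id,
            "command": command,
            "timestamp": timestamp,
            "prompt_source": prompt_source,
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # computed before "hash" is added
        self._entries.append(record)
        return record

    def verify(self):
        """Recompute the chain; return False if any entry was altered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

An in-memory list is of course not immutable on its own; in practice the entries would be written to storage that enforces append-only semantics, with the hash chain serving as an independent integrity check.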

How does the architecture protect against prompt injection attacks specifically?

Prompt injection, in which carefully crafted inputs trick the model into overriding its instructions, is a top concern for AI agents. GitHub's defense-in-depth strategy addresses it at multiple layers. First, all external inputs, such as user commands or data from third-party APIs, are sanitized and validated before being incorporated into the agent's prompt. This includes removing or escaping special characters and limiting input length. Second, agents operate with a constrained action space: instead of allowing arbitrary commands, the agent selects from a predefined set of approved functions (e.g., "read file", "run test"). Even if a prompt injection succeeds, the agent cannot execute operations outside its allowed list. Third, GitHub recommends using output encoding and content filtering to prevent the agent from leaking sensitive information in its responses. Finally, runtime monitoring detects unusual patterns, such as an agent suddenly attempting to delete files, and automatically halts the workflow pending human review.
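
The first two layers, input sanitization and a constrained action space, can be sketched in a few lines of Python. This is an illustrative sketch under assumptions, not GitHub's code: the handlers are stubs, the 2000-character limit is arbitrary, and the sanitizer only strips control characters, whereas a real one would be tuned to the prompt format in use.

```python
import re

MAX_INPUT_CHARS = 2000  # arbitrary limit chosen for the example
# ASCII control characters except tab, newline, and carriage return.
_CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")


def sanitize_input(text, max_chars=MAX_INPUT_CHARS):
    """Strip control characters and truncate overly long input."""
    return _CONTROL_CHARS.sub("", text)[:max_chars]


def read_file(path):
    return f"(contents of {path})"  # stub handler


def run_test(name):
    return f"(ran test {name})"  # stub handler


# The only actions the agent can trigger, whatever the prompt says.
ALLOWED_ACTIONS = {"read_file": read_file, "run_test": run_test}


def dispatch(action, argument):
    """Run an approved action; refuse anything not on the allowlist."""
    handler = ALLOWED_ACTIONS.get(action)
    if handler is None:
        raise PermissionError(f"action not permitted: {action}")
    return handler(sanitize_input(argument))
```

The important property is that the model's output is treated as a request to look up in `ALLOWED_ACTIONS`, never as code or a shell command to execute, so an injected instruction such as "delete the repository" has no handler to reach.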

What are the key recommendations for teams implementing GitHub's security model?

Teams looking to adopt GitHub's defense-in-depth model for agentic workflows should start by building a thorough threat model specific to their CI/CD environment, mapping out all data flows, third-party integrations, and potential attack vectors. Next, implement least-privilege secrets management using vault systems that provide short-lived tokens. For isolation, use container-based sandboxes with no outbound network access except to explicitly allowed services (e.g., artifact repositories). It is also crucial to integrate audit logging from day one and set up alerts for anomalous behavior, such as repeated failed actions or access attempts to restricted resources. Teams should establish a clear escalation path for when an agent triggers a security alert. Finally, regular penetration testing and red team exercises focused on AI agent interactions will help identify gaps. GitHub's architecture is a robust starting point, but each organization must adapt it to its specific risk profile and compliance requirements.
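
Two of these recommendations, the outbound allowlist and alerting on repeated failures, lend themselves to a short sketch. The host name `artifacts.example.internal`, the threshold of three failures, and the `FailureMonitor` class are all placeholders invented for the example; in practice the allowlist would normally be enforced at the network layer rather than in application code.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical allowlist: the only host the sandbox may contact.
ALLOWED_HOSTS = frozenset({"artifacts.example.internal"})
FAILURE_ALERT_THRESHOLD = 3  # arbitrary value for illustration


def egress_allowed(url, allowed_hosts=ALLOWED_HOSTS):
    """Permit a request only if the URL's host is explicitly allowlisted."""
    host = urlsplit(url).hostname  # None when the URL has no network location
    return host is not None and host in allowed_hosts


class FailureMonitor:
    """Count failed actions per agent and flag agents that cross a threshold."""

    def __init__(self, threshold=FAILURE_ALERT_THRESHOLD):
        self.threshold = threshold
        self.failures = Counter()

    def record_failure(self, agent_id):
        """Record one failure; return True when the agent should be escalated."""
        self.failures[agent_id] += 1
        return self.failures[agent_id] >= self.threshold
```

Matching on the parsed host name rather than on a substring of the URL matters here: a URL that merely mentions the allowed host in its path or query string is still rejected.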