Managing secrets at scale in Kubernetes environments presents significant security and operational challenges, especially for platform teams balancing governance with developer velocity. While native Kubernetes Secrets exist, they fall short of enterprise lifecycle requirements. This Q&A explores the best practices for integrating HashiCorp Vault with Kubernetes, focusing on why the Vault Secrets Operator (VSO) has become the recommended standard for automated, secure secret delivery without disrupting existing workflows.

What are the primary challenges platform teams face when managing secrets in Kubernetes?

Platform teams often discover a security gap as their Kubernetes environments scale. Applying security controls to secrets consistently, especially across multiple clusters and hybrid clouds, is hard to do without slowing down development. The core challenge shifts from simply getting a secret into a pod to managing the entire secret lifecycle: generation, injection, rotation, revocation, and auditing. As environments grow, developers need speed, while security teams require strict governance. Native Kubernetes Secrets were not designed to meet these enterprise governance needs, which leads to risks of exposure, stale credentials, and compliance failures. Teams must deliver secrets to workloads quickly while ensuring those secrets are centrally managed, versioned, and rotated automatically. The problem is just as acute in OpenShift and other enterprise Kubernetes distributions, which share the same gaps as plain Kubernetes.

Why are native Kubernetes Secrets insufficient for enterprise-grade secret management?

Native Kubernetes Secrets are only base64-encoded, not encrypted, by default, and they lack lifecycle features such as automatic rotation, revocation, and per-secret audit trails. They are stored in etcd, where they are not encrypted at rest unless encryption at rest is explicitly configured. Moreover, these secrets are tightly coupled to the cluster and cannot easily be shared with external systems or managed centrally across multiple clusters and clouds. Enterprises need a centralized, platform-agnostic secret store that enforces policies, provides access control, and supports dynamic secrets and lease management. Vault closes these gaps by acting as a single source of truth. However, even with Vault, the integration with Kubernetes requires careful design to avoid operational overhead. Native Secrets can still be used as the delivery mechanism, but they usually need to be backed by an external operator or sidecar injector to meet enterprise security and compliance requirements.
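To make the base64 point concrete, here is a minimal Python sketch that encodes a value the way it would appear under the `data` field of a Secret manifest and then reverses it. The secret value is invented for illustration; only the standard library is used.

```python
import base64

# Hypothetical value, as it would be stored under `data:` in a Secret manifest.
plaintext = "s3cr3t-db-password"
encoded = base64.b64encode(plaintext.encode("utf-8")).decode("ascii")

# Anyone who can read the Secret object (or an unencrypted etcd backup)
# can reverse the encoding. No key is involved at any point.
decoded = base64.b64decode(encoded).decode("utf-8")

print(encoded)
print(decoded)
```

Because decoding needs no key, access to the Secret object is equivalent to access to the plaintext, which is why RBAC and etcd encryption at rest matter even before Vault enters the picture.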

What are the main methods for integrating Vault with Kubernetes or OpenShift?

Several integration patterns exist, each with distinct tradeoffs. The Vault Agent sidecar injector was historically the first robust solution: it runs a Vault Agent as a sidecar that retrieves secrets from Vault and writes them to a shared volume. The Secrets Store CSI driver (SSCSI) mounts secrets as volumes using a CSI driver that can pull from Vault or other providers. Third-party secret operators (such as External Secrets Operator) abstract the source and sync secrets to Kubernetes Secrets. The newest and recommended approach is the Vault Secrets Operator (VSO), a Kubernetes-native operator that synchronizes secrets from Vault into Kubernetes Secrets. VSO also offers a built-in CSI companion driver for protected secrets, which delivers secrets directly into pods instead. The methods differ in security, performance, complexity, and how developers interact with secrets inside their pods. Choosing the right one depends on your organization's operational maturity and security requirements.

What is the Vault Secrets Operator (VSO) and why is it now the recommended standard?

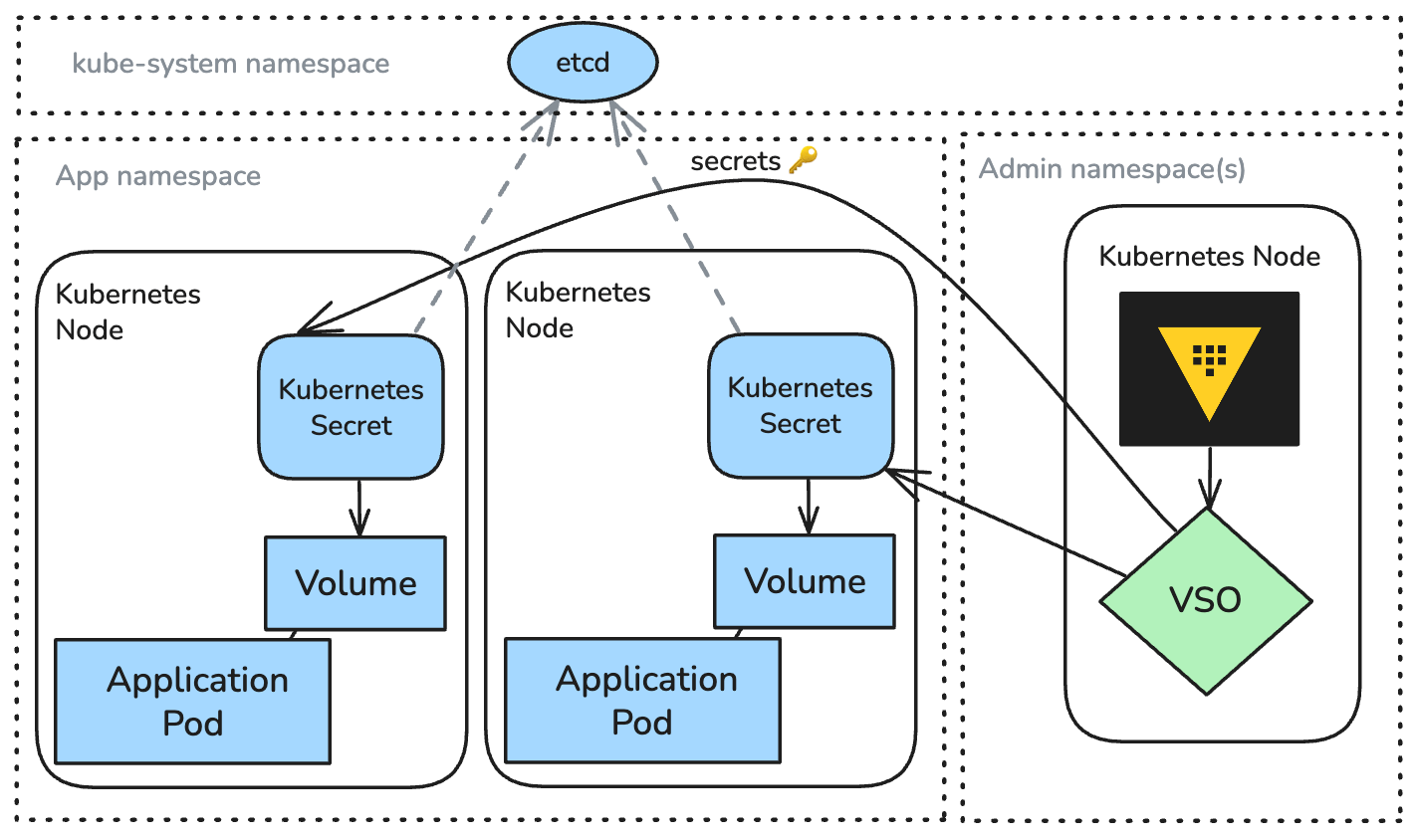

The Vault Secrets Operator (VSO) is a Kubernetes-native operator developed by HashiCorp that automates the synchronization of secrets from Vault into Kubernetes Secrets. It covers the secret lifecycle end to end: Vault generates, rotates, and revokes secrets according to its policies and lease settings, and VSO keeps the corresponding Kubernetes Secrets in step. VSO uses the Kubernetes operator pattern, which makes it declarative and easy to integrate with GitOps workflows. It supports both static secrets (such as key-value entries in Vault's KV engine) and dynamic secrets (such as short-lived database credentials) from Vault. Compared to older methods like the Vault Agent sidecar injector, VSO offers lower resource overhead and better performance at scale, and it does not require injecting containers into application pods or running a mutating admission webhook. It also works with OpenShift. As the partnership between HashiCorp and Red Hat (via IBM) has deepened, VSO has become the preferred standard because it delivers enterprise-grade lifecycle automation while preserving the developer experience: developers interact with Kubernetes Secrets as before, but those secrets are now backed by Vault's security model.
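As a rough illustration of the declarative model, the sketch below builds a `VaultStaticSecret` manifest as a Python dict and prints it as JSON, which `kubectl apply -f` also accepts. The field names follow the VSO `secrets.hashicorp.com/v1beta1` API as commonly documented, but they should be checked against the CRD version installed in your cluster. The resource names, namespace, mount, path, and the `default` VaultAuth reference are all hypothetical.

```python
import json


def vault_static_secret(name, namespace, mount, path, dest_secret,
                        refresh_after="60s"):
    """Build a VaultStaticSecret manifest as a plain dict (illustrative only)."""
    return {
        "apiVersion": "secrets.hashicorp.com/v1beta1",
        "kind": "VaultStaticSecret",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "vaultAuthRef": "default",      # assumed name of a VaultAuth resource
            "mount": mount,                 # KV mount in Vault (hypothetical)
            "type": "kv-v2",
            "path": path,                   # secret path under the mount
            "refreshAfter": refresh_after,  # how often VSO re-reads the secret
            "destination": {"name": dest_secret, "create": True},
        },
    }


manifest = vault_static_secret(
    name="app-db",
    namespace="demo",
    mount="kvv2",
    path="apps/demo/db",
    dest_secret="app-db-credentials",
)
print(json.dumps(manifest, indent=2))
```

Generating the manifest from a function is only a convenience for the example; in a GitOps setup the same structure would normally live in the repository as a static YAML or JSON file.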

How does VSO compare to the Vault agent sidecar injector?

The Vault Agent sidecar injector was one of the first robust solutions for delivering Vault secrets into Kubernetes pods. It works by injecting a Vault Agent sidecar container that authenticates with Vault, retrieves secrets, and writes them to a shared emptyDir volume. While effective, it has several tradeoffs. First, it adds resource overhead because each pod runs an extra Vault Agent instance. Second, it requires a MutatingAdmissionWebhook to inject the sidecar, which can complicate deployment and interact with other admission plugins. Third, applications often need a restart or a signal before they pick up updated secrets. In contrast, VSO is a standalone operator that runs centrally, not per pod. It syncs secrets into Kubernetes Secret objects, so pods consume them via native mechanisms (environment variables or volume mounts). Updates to secrets in Vault are synced into the Kubernetes Secret automatically, with no pod restart needed for the sync itself. VSO also scales better across large clusters and reduces the attack surface because it does not embed Vault Agent in every pod. For most modern use cases, VSO offers a cleaner, more Kubernetes-native approach.
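The "native mechanisms" mentioned above can be sketched as an ordinary pod spec, here built as a Python dict and printed as JSON. The Secret name, key, image, and mount path are hypothetical; the `secretKeyRef` and `secret.secretName` fields are standard Kubernetes API fields. Nothing in the spec refers to Vault or VSO.

```python
import json


def pod_consuming_secret(secret_name):
    """Pod spec that reads one Secret as an env var and as a mounted volume."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "demo-app"},
        "spec": {
            "containers": [{
                "name": "app",
                "image": "registry.example.com/demo-app:1.0",  # hypothetical image
                # Option 1: environment variable, resolved when the container starts.
                "env": [{
                    "name": "DB_PASSWORD",
                    "valueFrom": {
                        "secretKeyRef": {"name": secret_name, "key": "password"}
                    },
                }],
                # Option 2: files under /etc/creds, one per key in the Secret.
                "volumeMounts": [{
                    "name": "creds",
                    "mountPath": "/etc/creds",
                    "readOnly": True,
                }],
            }],
            "volumes": [{"name": "creds", "secret": {"secretName": secret_name}}],
        },
    }


pod = pod_consuming_secret("app-db-credentials")
print(json.dumps(pod, indent=2))
```

The two options behave differently on rotation: environment variables keep the value they had at container start, while files from a mounted Secret are refreshed by the kubelet after the Secret changes (subPath mounts excepted). Workloads that rely on environment variables therefore still need a restart to see a rotated value.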

What are the benefits of using VSO with its built-in CSI companion driver for protected secrets?

For workloads requiring extra isolation, such as those under PCI-DSS compliance or those whose secrets must never be written to disk, VSO provides a built-in CSI companion driver called VSO Protected Secrets. This driver mounts secrets directly from Vault into a pod's filesystem using the Container Storage Interface (CSI), without storing them in etcd or as Kubernetes Secrets. The secret data is only present in the pod's memory or ephemeral volume, and it is automatically revoked when the pod is terminated. Of the options discussed here, this method offers the strongest isolation because it eliminates the risk of stale secrets in the cluster's data store. Additionally, it supports in-line health checks to ensure the secret remains fresh throughout the pod's lifetime. The tradeoff is slightly higher setup complexity and a dependency on CSI driver availability in the cluster. However, for teams that need to meet rigorous compliance standards, VSO with protected secrets combines automation with strict isolation of secret data.

How does VSO help scale secret lifecycle management without slowing down development?

VSO automates delivery across the entire secret lifecycle (generation, rotation, and revocation), carrying Vault's policies through to Kubernetes. This means developers no longer need to manually request secret updates or coordinate with platform teams for rotations. The operator re-reads the configured Vault paths and updates Kubernetes Secrets automatically when secrets change (based on lease time, refresh intervals, or schedule). It also supports dynamic secrets that expire, so workloads use short-lived credentials. Because secret provisioning is decoupled from application code, developers can focus on building features while platform teams enforce governance centrally. VSO fits GitOps workflows because its custom resources, such as VaultAuth and VaultStaticSecret or VaultDynamicSecret, can be stored in Git, which gives audit trails and reproducibility. Moreover, because VSO does not modify pod specs or require webhooks, it does not slow down pod startup. This combination of automation, security, and developer experience allows enterprises to scale from a few clusters to dozens without compromising velocity or compliance.
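The update-on-change behavior can be pictured as a small reconcile step. The Python sketch below is a deliberately simplified illustration, not VSO's actual implementation: Vault and the Kubernetes API are replaced by in-memory dicts, and `digest` and `reconcile` are hypothetical helper names.

```python
import hashlib
import json


def digest(data):
    """Stable fingerprint of a secret payload."""
    blob = json.dumps(data, sort_keys=True).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()


def reconcile(read_source, cluster_secrets, name):
    """Copy the source payload into the cluster store only if it changed.

    Returns True when the stored secret was created or updated.
    """
    desired = read_source()
    current = cluster_secrets.get(name)
    if current is not None and digest(current) == digest(desired):
        return False  # already in sync, nothing to write
    cluster_secrets[name] = dict(desired)  # store a copy, not a reference
    return True


# In-memory stand-ins for Vault and the Kubernetes API (both hypothetical).
vault = {"password": "v1"}
cluster = {}

results = []
results.append(reconcile(lambda: vault, cluster, "app-db"))  # first sync creates
results.append(reconcile(lambda: vault, cluster, "app-db"))  # unchanged, skipped
vault["password"] = "v2"                                     # rotation at the source
results.append(reconcile(lambda: vault, cluster, "app-db"))  # change is propagated
print(results)  # prints [True, False, True]
```

A real operator would run this step on a timer or in response to events, and would authenticate to Vault and write through the Kubernetes API. The point of the sketch is only that writes happen when the source changes, so developers never have to trigger them.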